By March 2026, the blockchain industry has moved past the question of whether scaling matters. We know it does. But the real conversation isn't about raw transaction speed anymore; it's about trustless verification. If you cannot prove data exists without downloading the entire history, your network isn't secure. This fundamental bottleneck birthed the Data Availability Layer (DAL), the backbone holding our modern modular architectures together.

Think back to 2023, before Proto-Danksharding rolled out broadly. Back then, every single node had to store every single byte of transaction history to stay in sync. That approach capped throughput. Now, nearly three years later, we have dedicated systems that let us publish data without forcing full storage. Let's break down how this specific infrastructure piece transformed the landscape and how you should leverage it today.

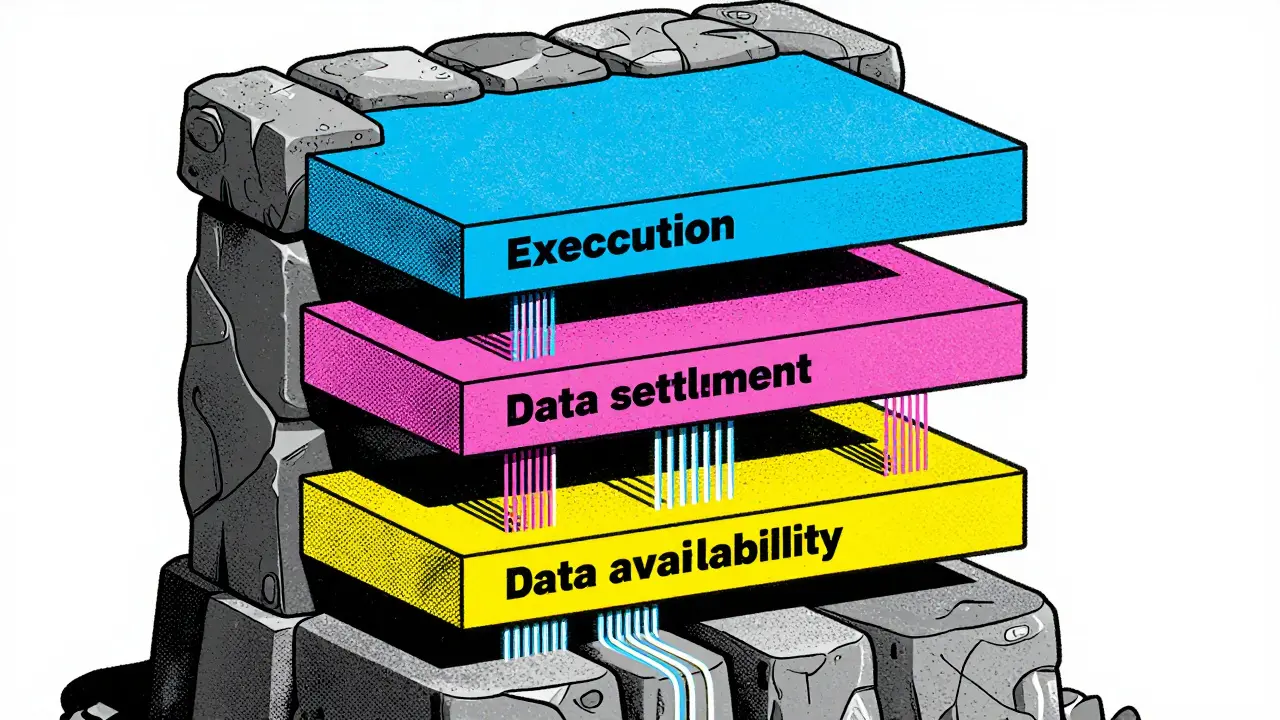

The Core Function: Separating Storage from Execution

In a monolithic setup, like early Ethereum or Bitcoin, your security comes from everyone checking everything. That creates a hard limit: you can't process more transactions than the slowest validator can handle while also storing them all. The Data Availability Layer solves this by unbundling responsibilities. It guarantees that the data required to execute transactions is present, available, and verifiable, even if no single node stores the whole thing locally.

This separation allows execution environments (rollups) to run fast. They offload the heavy lifting of storage to the DAL. Without a DAL, a rollup can't convince anyone it executed a batch correctly, because users have no way to download the batch inputs and check them. With a DAL, anyone can fetch those inputs and verify the rollup's proof against them. Light clients don't even need the full batch: they sample a small fraction of the data, and the math gives high confidence that the rest is there.

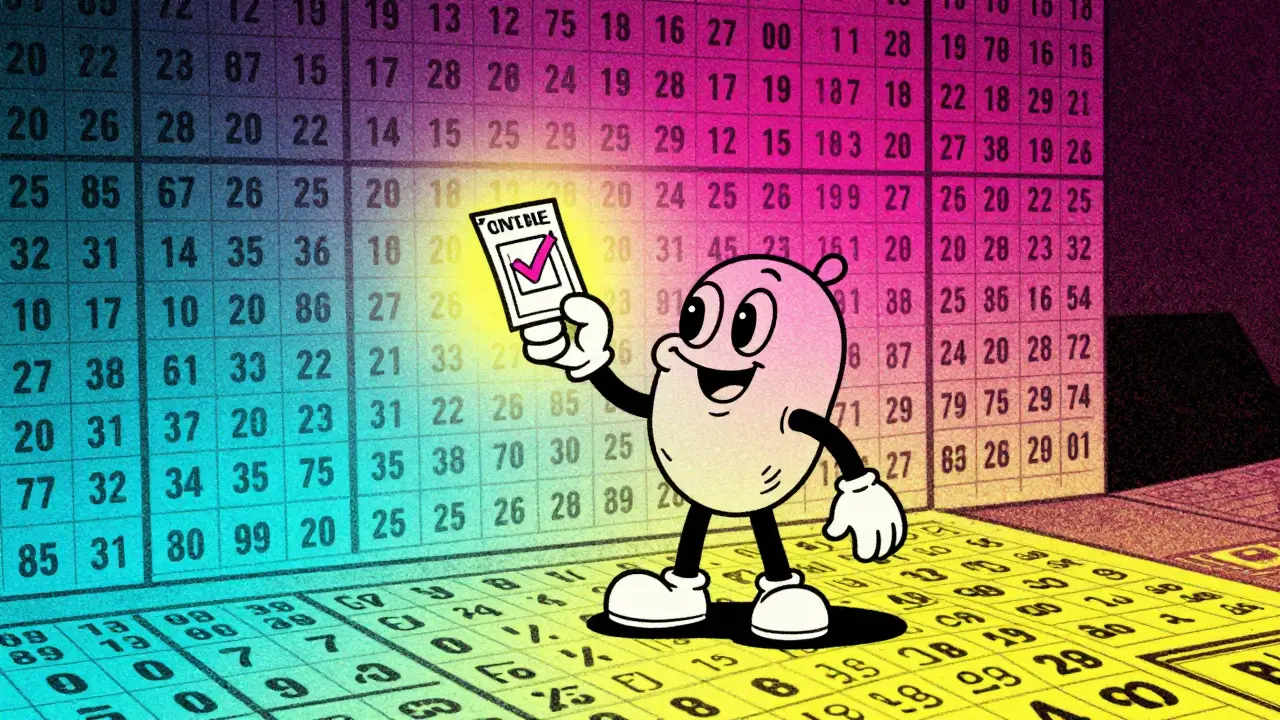

How Verification Works Without Full Downloads

You might wonder how light clients can trust a massive dataset without fetching gigabytes of information. The answer lies in Data Availability Sampling. It sounds complex, but the principle is simple probability. Imagine a lottery ticket. If someone claims they hold 1,000 tickets, they don't show you all 1,000. They just let you check ten random spots. If ten out of ten are valid, you can be statistically confident, though never strictly certain, that the rest exist. On its own, this only catches large-scale cheating: someone hiding a single ticket would almost always pass ten random checks.
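The probability behind the lottery analogy can be sketched in a few lines. This is a minimal illustration, not any network's actual sampling code: the function names are made up for this example, and it assumes each check picks a chunk uniformly at random and independently (sampling with replacement, which slightly overstates the chance of missing a problem).

```python
import math


def miss_probability(withheld_fraction: float, samples: int) -> float:
    """Chance that every one of `samples` random checks lands on an
    available chunk even though `withheld_fraction` of chunks is missing."""
    if not 0.0 <= withheld_fraction <= 1.0:
        raise ValueError("withheld_fraction must be between 0 and 1")
    if samples < 0:
        raise ValueError("samples must be non-negative")
    return (1.0 - withheld_fraction) ** samples


def samples_needed(withheld_fraction: float, target_confidence: float) -> int:
    """Smallest number of checks whose miss probability is at most
    1 - target_confidence."""
    if not 0.0 < withheld_fraction < 1.0:
        raise ValueError("withheld_fraction must be strictly between 0 and 1")
    if not 0.0 < target_confidence < 1.0:
        raise ValueError("target_confidence must be strictly between 0 and 1")
    return math.ceil(
        math.log(1.0 - target_confidence) / math.log(1.0 - withheld_fraction)
    )


if __name__ == "__main__":
    # Half the data withheld, ten checks: all ten pass about 0.1% of the time.
    print(miss_probability(0.5, 10))
    # Only 0.1% withheld (one ticket in 1,000): ten checks almost always pass.
    print(miss_probability(0.001, 10))
    # Checks needed for 99.9% confidence when half the data is withheld.
    print(samples_needed(0.5, 0.999))
```

The second call shows why sampling needs help: small amounts of withholding are nearly invisible to a handful of checks, so the scheme depends on making small withholding harmless and large withholding necessary for an attack.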

To make this mathematical guarantee robust, developers utilize Erasure Coding. This technique expands your original data with redundancy. If you submit 1 MB of information, the system encodes it into 2 MB of fragments spread across validators. To reconstruct the original file, you need any 50% of those fragments. The data therefore stays recoverable even if up to half of the fragments are lost or withheld, and an attacker who wants to make it unrecoverable must hide more than half, which random sampling detects quickly. This mechanism underpins almost every modern implementation we see in 2026.
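A toy version of the "any 50% is enough" property can be built with polynomial interpolation, the idea behind Reed-Solomon codes. The sketch below is illustrative only: the field modulus, the function names, and the tiny four-symbol message are assumptions chosen for readability, and production systems use different fields, much larger blocks, and faster algorithms.

```python
P = 2**31 - 1  # a prime modulus for the toy field (assumption for this sketch)


def lagrange_eval(points, x):
    """Evaluate at x the unique polynomial of degree < len(points)
    that passes through `points`, with all arithmetic modulo P."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        # pow(den, -1, P) is the modular inverse (Python 3.8+).
        total = (total + yi * num * pow(den, -1, P)) % P
    return total


def encode(data):
    """Treat k symbols as evaluations at x = 0..k-1 and extend the same
    polynomial to x = k..2k-1, doubling the number of fragments."""
    k = len(data)
    points = list(enumerate(data))
    return [lagrange_eval(points, x) for x in range(2 * k)]


def reconstruct(fragments, k):
    """Recover the k original symbols from any k surviving fragments,
    given as a dict mapping fragment index to value."""
    if len(fragments) < k:
        raise ValueError("fewer than k fragments: data is unrecoverable")
    points = list(fragments.items())[:k]
    return [lagrange_eval(points, x) for x in range(k)]


if __name__ == "__main__":
    data = [72, 101, 108, 108]            # four original symbols
    coded = encode(data)                   # eight fragments
    survivors = {i: coded[i] for i in (1, 4, 6, 7)}  # any four survive
    print(reconstruct(survivors, len(data)) == data)
```

The first half of the encoded output equals the original data, so honest readers can use it directly; the second half exists purely as redundancy.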

Cryptographic proofs also play a vital role. KZG Polynomial Commitments let validators check properties of the data quickly. Instead of re-hashing entire blocks, nodes verify a short commitment to the data together with small opening proofs showing that individual sampled pieces are consistent with that commitment. This innovation, heavily researched since 2020, significantly reduced the overhead of verifying availability.

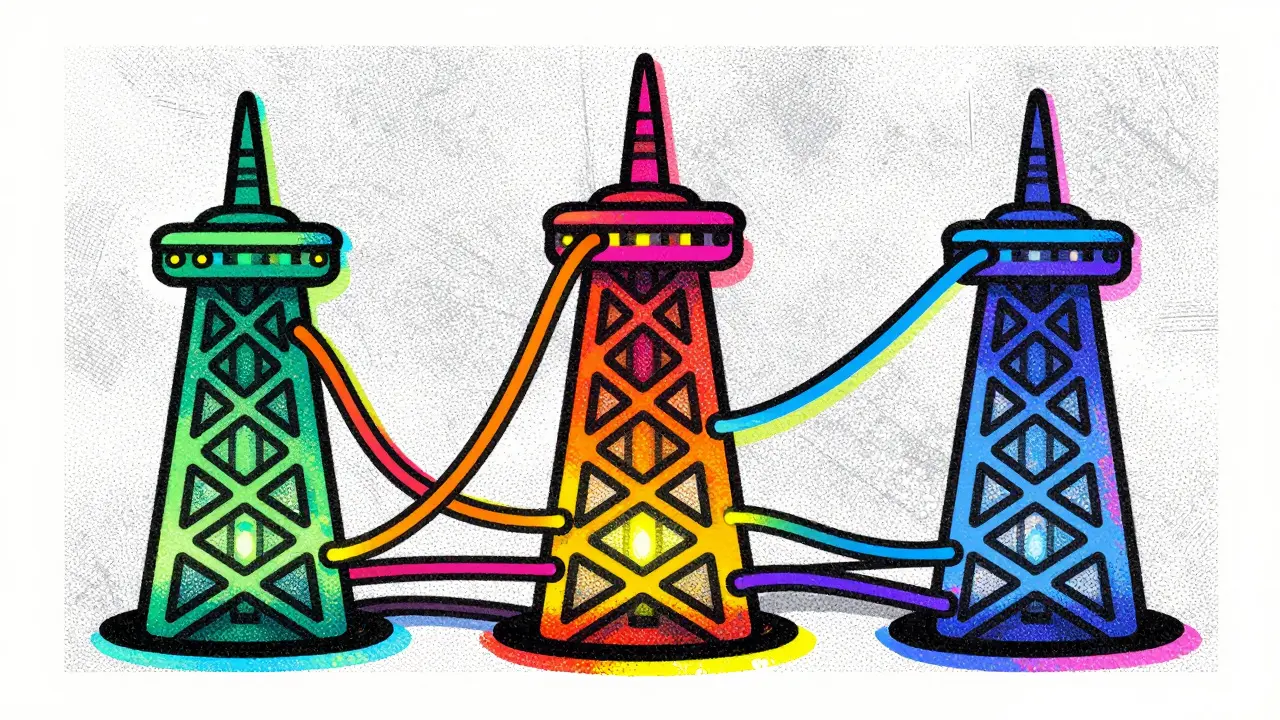

Architecture Choices: On-Chain vs. Dedicated Networks

Choosing where your data lives depends entirely on your trade-offs between security and cost. Currently, there are two dominant models in the ecosystem.

The Integrated Approach (Ethereum):

Ethereum treats its main chain as the primary source of truth. When EIP-4844 went live in 2024, it introduced "blobs" specifically for rollup data availability. This keeps the security inheritance of Ethereum's consensus but adds a dedicated space for data. As of late 2025, costs dropped roughly 90% compared to pre-danksharding levels, making L2 rollups viable for high-frequency trading.
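For a sense of scale, the blob capacity at the launch of EIP-4844 follows from a few constants in the specification. The sketch below uses the launch-time values (4096 field elements of 32 bytes per blob, a target of 3 and a maximum of 6 blobs per block, 12-second slots, and a retention window of 4096 epochs); later upgrades have raised the blob counts, so treat the per-block figures as a historical baseline. The function names are made up for this example.

```python
# Launch-time parameters from EIP-4844 and the consensus specs.
FIELD_ELEMENTS_PER_BLOB = 4096
BYTES_PER_FIELD_ELEMENT = 32
TARGET_BLOBS_PER_BLOCK = 3
MAX_BLOBS_PER_BLOCK = 6
SECONDS_PER_SLOT = 12
SLOTS_PER_EPOCH = 32
BLOB_RETENTION_EPOCHS = 4096  # minimum period nodes must serve blobs

# Raw blob size; usable payload is slightly smaller because each
# 32-byte element must be a valid field element.
BLOB_SIZE_BYTES = FIELD_ELEMENTS_PER_BLOB * BYTES_PER_FIELD_ELEMENT


def blob_bytes_per_block(blobs: int) -> int:
    """Raw blob bytes carried by one block holding `blobs` blobs."""
    return blobs * BLOB_SIZE_BYTES


def blob_bytes_per_second(blobs: int) -> float:
    """Sustained raw blob throughput at `blobs` blobs per slot."""
    return blob_bytes_per_block(blobs) / SECONDS_PER_SLOT


def retention_days() -> float:
    """How long blobs must remain retrievable before pruning is allowed."""
    seconds = BLOB_RETENTION_EPOCHS * SLOTS_PER_EPOCH * SECONDS_PER_SLOT
    return seconds / 86_400


if __name__ == "__main__":
    print(BLOB_SIZE_BYTES)                                # bytes per blob
    print(blob_bytes_per_block(MAX_BLOBS_PER_BLOCK))      # bytes per full block
    print(blob_bytes_per_second(TARGET_BLOBS_PER_BLOCK))  # bytes per second
    print(round(retention_days(), 1))                     # days before pruning
```

The retention figure is what makes blobs cheap: nodes only have to keep the data for a few weeks, long enough for anyone who needs it to fetch and archive it.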

The Dedicated Approach (Celestia, EigenDA):

Separate chains built solely for data avoid the congestion of general computation layers. Celestia, which hit mainnet a few years ago, focuses exclusively on availability. This means developers get lower fees and higher throughput, sometimes exceeding 1 MB per second. However, they sacrifice the immediate security inheritance of a giant like Ethereum, relying instead on their own distinct validator set.

| Feature | Ethereum (Post-EIP-4844) | Celestia (Dedicated) | EigenDA (AVS) |
|---|---|---|---|
| Security Model | Ethereum Beacon Chain | Native Proof-of-Stake | Shared Security (EigenLayer) |
| Estimated Cost | Low (~$0.01) | Very Low (<$0.005) | Ultra-Low (Sub-$0.001) |
| Throughput Cap | ~768 KB per slot at launch (6 blobs × 128 KB) | Dynamic (Megabytes) | Scales with Validators |
| Best For | High-value Assets | App-Specific Chains | Large Scale Indexers |

Ecosystem Maturity and Tooling Gaps

While the theory is solid, the engineering reality in 2026 still faces friction. When EIP-4844 launched, many toolchains struggled to read blob data efficiently, since blobs travel alongside transactions rather than inside calldata. Libraries needed updates to parse the new formats correctly. Similarly, Cosmos-based rollups using Celestia faced a learning curve, because the Cosmos SDK works quite differently from standard Ethereum Virtual Machine tooling.

A survey from 2025 showed that 68% of teams saw scalability improvements after switching to a DAL-backed modular stack. However, 52% cited immature tooling as a lingering barrier. Debugging sampling failures, configuring encoding parameters, and handling cross-chain data retrieval all require specialized knowledge.

For enterprise deployments, regulatory compliance became another factor. The EU's MiCA framework, fully applicable since the end of 2024, requires verifiable data availability for transaction records. This inadvertently accelerated adoption of DALs among financial institutions, ensuring public auditability without exposing sensitive private keys.

Strategic Implementation Guide for 2026

If you are building a new project today, your decision matrix relies on risk tolerance.

- Choose Ethereum Blobs if: You prioritize maximum security. Your asset is high value, and users expect Ethereum-grade protection. The slight cost premium is worth the safety net of thousands of global validators.

- Choose Celestia or similar if: You need extreme scale. Think gaming or social media where individual transactions carry low value but volume is massive. The fee savings here are substantial.

- Choose EigenDA or Restaked DA if: You want flexibility without spinning up your own chain. Leveraging existing liquid staking assets reduces capital requirements, though it introduces smart contract reliance.
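The three rules above can be written down as a small decision helper. This is only a sketch of the guide's logic: the type names, the field names, the order in which the rules are checked, and the fallback choice are all assumptions made for illustration, and a real selection process would weigh costs and risks in far more detail.

```python
from dataclasses import dataclass
from enum import Enum


class DAOption(Enum):
    ETHEREUM_BLOBS = "Ethereum blobs"
    DEDICATED_DA = "Celestia or similar"
    RESTAKED_DA = "EigenDA or restaked DA"


@dataclass(frozen=True)
class ProjectProfile:
    high_value_assets: bool      # users expect Ethereum-grade protection
    needs_extreme_scale: bool    # huge volume of low-value transactions
    wants_restaked_security: bool  # flexibility without running a chain


def recommend(profile: ProjectProfile) -> DAOption:
    """Apply the guide's rules in priority order (the ordering is an
    assumption: security first, then scale, then flexibility)."""
    if profile.high_value_assets:
        return DAOption.ETHEREUM_BLOBS
    if profile.needs_extreme_scale:
        return DAOption.DEDICATED_DA
    if profile.wants_restaked_security:
        return DAOption.RESTAKED_DA
    # Conservative fallback when no rule matches (also an assumption).
    return DAOption.ETHEREUM_BLOBS


if __name__ == "__main__":
    game = ProjectProfile(
        high_value_assets=False,
        needs_extreme_scale=True,
        wants_restaked_security=False,
    )
    print(recommend(game).value)
```

Writing the rules out this way makes the implicit priority visible: a project that is both high value and high volume lands on Ethereum blobs here, which is a judgment call you may want to reverse.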

Keep in mind interoperability. In late 2023, there were fears about walled gardens. Fortunately, standardization efforts have matured. Most DALs support generic interfaces for reading headers. You can often build a bridge that reads availability proofs from Celestia and executes on Ethereum, giving you the best of both worlds.

Why do we need a separate layer for data?

We separate layers because monolithic blockchains force every validator to store everything, creating a bottleneck. A separate layer lets validators only check if data is present, not store it all, enabling faster networks.

Is data sampling secure?

Yes, provided the parameters are set correctly. Statistical probability ensures that if a large enough random sample is verified, the remaining hidden data exists with near 100% certainty.

What are blobs in Ethereum?

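Blobs are temporary storage containers introduced via EIP-4844. They allow rollups to post data cheaper and faster on Ethereum without bloating the permanent state, because nodes are only required to keep them for a limited window (roughly 18 days) before pruning.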

Does Celestia compete with Ethereum?

Not directly. Celestia focuses only on data availability, whereas Ethereum handles settlement, execution, and consensus. They often complement each other in hybrid setups.

What is the biggest risk of using a DAL?

The primary risk is centralization of the validator set. If too few validators control the data, they could censor or withhold availability. Always check the decentralization metrics before choosing.
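One common way to put a number on validator-set centralization is the Nakamoto coefficient: the smallest number of validators whose combined stake exceeds a critical threshold. The sketch below is a minimal illustration with made-up stake figures; the one-third default reflects a common convention for BFT-style proof-of-stake systems, where more than a third of stake can stall finality, and the right threshold for a given DAL is something to confirm from its own documentation.

```python
def nakamoto_coefficient(stakes, threshold=1 / 3):
    """Smallest number of validators whose combined stake exceeds
    `threshold` of the total. Lower values mean more centralization."""
    total = sum(stakes)
    if total <= 0:
        raise ValueError("total stake must be positive")
    running = 0
    # Greedily take the largest validators first.
    for count, stake in enumerate(sorted(stakes, reverse=True), start=1):
        running += stake
        if running > threshold * total:
            return count
    return len(stakes)


if __name__ == "__main__":
    # Hypothetical stake distributions, for illustration only.
    concentrated = [40, 25, 15, 10, 5, 5]
    even = [10] * 10
    print(nakamoto_coefficient(concentrated))         # one validator suffices
    print(nakamoto_coefficient(even))                 # several must collude
    print(nakamoto_coefficient(concentrated, 2 / 3))  # higher threshold
```

A single metric never tells the whole story, since validators can share operators, hosting providers, or jurisdictions, but a very low coefficient is a clear warning sign.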

Sean Carr

April 1, 2026 at 06:59

The separation logic discussed here is critical. Many teams still conflate execution environments with storage constraints during implementation phases. We see this pattern repeat when rollups attempt to onboard without verifying their DAL connectivity first. It creates unnecessary friction later in the dev cycle. Focus on the blob verification handshake early. That saves weeks of debugging latency issues downstream. Just my two cents before you dive too deep.

Callis MacEwan

April 2, 2026 at 07:11

People are completely misinterpreting the security implications here regarding shared state validation. You claim sampling provides statistical certainty but ignore the adversary capability to manipulate the commitment scheme itself. In a truly adversarial environment, probability isn't enough without robust economic disincentives built into the slashing conditions. Most developers skip this layer entirely because it feels redundant if they trust the validator set anyway. But relying on trusted validators defeats the entire purpose of having a decentralized ledger system initially. We need to discuss the actual failure modes of erasure coding when network partitions occur unexpectedly. If the threshold for reconstruction drops below 50 percent then data loss becomes imminent regardless of the math. Furthermore, the assumption that KZG polynomial commitments remain quantum resistant is a massive gamble right now. Post-quantum cryptography hasn't been integrated into the standard SDK yet for most of these layers. Until then, your availability guarantees are effectively temporary solutions rather than permanent fixes. Security inheritance is another red herring frequently marketed by L2 projects seeking cheap funding. They imply Ethereum mainnet secures everything while offloading the heavy lifting to weaker consensus mechanisms. Celestia validators might have less stake compared to beacon chain participants overall. This introduces a centralization vector that the article barely touches upon with any meaningful depth. We are building sandcastles on the assumption that incentive structures never change over time. It is naive to think market forces won't target these specific weak points eventually. The industry needs to focus on true decentralization metrics instead of just claiming scalability wins.

Alex Kuzmenko

April 4, 2026 at 02:00

Hey there, i get what u mean bout the quantum risks tho. Its pretty scary if those algos break later on. But dat probablity stuff helps keep us safe right now till we fix it. Thanks for pointing out the staking difference on celestia though. I forgot that part was diff from ETH mainchain. U r right bout the market forces attacking weak spots too. Will keep that in mind when deploying next quarter.

Elizabeth Akers

April 5, 2026 at 17:25

honestly the tooling gaps are the real bottleneck people dont talk enough about. everyone wants the shiny new tech but nobody wants to debug the parsing libraries for blobs. its just so annoying when docs say compatible but its not actually compatible with the sdk. hope we see better support soon cause im tired of manual workarounds for reading headers.

Alex Lo

April 5, 2026 at 20:02

Yeah totally agree with u on that front i spent three weeks last month stuck on a parser issue and it was the worst experience ever. The library docs were outdated and the community discord was dead so no help came through to me. Then i realized i had to recompile the node from source code which took forever on my old laptop setup. I feel like this is why adoption slows down for small teams who dont have big budgets for engineering staff. Every time there is a minor update to the protocol someone has to rewrite the client logic and thats exhausting for devs. But once you get past that hurdle the performance gains are absolutely worth the initial pain honestly. Imagine processing transactions at sub cent fees while maintaining full verifiability of data integrity. It really does feel like magic when it finally clicks and starts working properly without errors. Just wish the ecosystem moved faster on standardizing these tools across all different chains so we dont duplicate effort constantly. Maybe cross chain compatibility will improve by next year though so keep pushing forward everyone!

Matt Bridger

April 6, 2026 at 20:29

The discourse surrounding infrastructure maturity remains superficial at best. Few understand that enterprise deployment requires more than consumer grade availability. Regulatory frameworks like MiCA demand precision not speculation about future upgrades. One must consider the audit trails required for institutional grade financial instruments operating on such rails. Most participants lack the requisite knowledge to evaluate risk properly.

Tiffany Selchow

April 7, 2026 at 21:51

All this fancy math means nothing if the american regulators shut it down tomorrow.